So, the LHC is up and running, and has finally begun producing collisions! Seventh- and eighth-year high-energy physics graduate students around the world are celebrating (and working hard) at the prospect that they might actually get their PhDs after all!

Oh, and the world hasn't been destroyed yet! For those of you who are still worried by the thought that the LHC will create a black hole and collapse the Earth in on itself, here's a web site with up-to-date information:

Has the large hadron collider destroyed the world yet?

Saturday, December 5, 2009

Thursday, November 12, 2009

Miracles

I like to think. A lot. Maybe too much.

Friends and family members always like to mention that I live in "another world". My in-laws, who speak primarily in Chinese, often like to stop mid-conversation to ask me if I understand what they're saying. My response is usually, "I wasn't listening".

Lest you think I'm being rude, it is very exhausting trying to listen to a foreign language for long periods of time. But that is not what this post is supposed to be about, so let's move on...

So, in those moments when my mind wanders, it takes me off in many directions. Sometimes they are simple thoughts like, "I wonder if I can play video games right now without my wife getting mad at me", or "How do we get the manic dog to stop barking?"

When I'm driving, I often marvel at the human brain and what it is capable of. We take for granted the complex motor skills that are required to make a simple turn. We learn the skills and practice to the point where we don't have to think any more. We think about turning and the practiced movements of our arms, feet, neck and eyes do everything for us.

I remember reading about an experiment where electrodes were placed in a monkey's brain, which transmitted signals to a robotic arm. With a little practice, the monkey could control the arm and use it almost as another limb- using the arm to grab treats and feed itself. I wonder if this skill is related to the ability to use tools that humans and monkeys have developed as an evolutionary boost.

But that is by no means the most amazing thing I've seen.

Watching a child grow is an amazing experience. It is definitely something that might make you believe in miracles. And my daughter truly is a miracle.

When she was just a few days old, I'd watch her sleeping and see the rise and fall of her chest in time with her breathing. I'd marvel at the thought of how much had to be made just right to keep that motor running. I used to have a small fear in the back of my mind that she'd suddenly stop. That sounds morbid, but I bet every parent has had the same thought at some point or another.

After all, if you really think about it, you might realize how complex the human body is. Modern science has worked tirelessly on understanding how life works, and we have only just begun. If a baby were engineered by humans, it almost certainly wouldn't work.

But my baby can not only breathe. She can see, feel, and hear. She can learn. Every moment, she observes and learns about pieces of the world around her. She practices the skills she will eventually need in order to function as a human being- all without prodding or any incentive other than her own basic need to do so.

Through years of scientific study aimed at understanding the processes my daughter has gone through, we have learned many things. In many cases, we have learned pieces of information that we already knew in some way or another. We have learned that children need love and attention in just the same way that they need food and water. We have learned time and time again that what is best for us just happens to be what nature has provided for us all along.

In my world, miracles are everywhere. They don't have to be divinely summoned to be so.

I'd better stop before I make someone throw up.

Sunday, November 1, 2009

Physicists are Everywhere

For those of you who don't really know what goes on in the world of experimental physics, let me tell you about what I've been doing the last couple weeks.

So, I've posted about the KATRIN project before, but here's a recap: KATRIN is a project that aims to directly measure the neutrino mass by examining the beta-decay spectrum of tritium. The specific part I'm working on is the rear section, which is an important calibration piece of the experiment. For the output of the experiment to make sense, we need to know precisely how much tritium there is in the system at any given time. To accomplish this, we use a detector in the back wall to count the electrons that don't make it through the main spectrometer.

Now, I've been examining one potential problem with this picture- one having to do with electron scattering. You see, the beta decay electrons don't just travel around until they hit the detector- they can also scatter by interacting with the tritium in the column. These interactions make the electrons lose energy, and happen with more frequency when the tritium density increases. With less energy, the electrons are then less likely to be counted in the back end, and this introduces a systematic uncertainty into the measurement of the tritium density.

So, to explore the problem, I wrote a Monte Carlo program that shows the effect that electron scattering actually has. My program first randomly selects where the electron is emitted from, and at which angle. Then the program selects how far it gets before it scatters, and how much energy it loses. If the electron hasn't reached the wall, the process repeats until it does. The program then repeats the whole process until it has simulated 100,000 electrons. Next, I ran my output through my professor's code, which does a similar thing with the detector design we're using. Altogether, these simulations show what we should expect as an output, should we see some variation in the tritium density, and give a baseline for the systematic uncertainties we should expect. They also give us some commentary on the feasibility of the detector design.
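Here is a minimal sketch of the loop described above, not the actual program from this post. Everything numeric in it is an assumption made for illustration: a one-dimensional column of length 10 (arbitrary units) with the rear wall at z = 0, a fixed starting energy of 18.6 keV, exponentially distributed distances between scatters, and an exponentially distributed energy loss per scatter. The names `simulate_electron` and `run` are hypothetical, and a shorter `mean_free_path` stands in for a higher tritium density.

```python
import random


def simulate_electron(rng, column_length=10.0, mean_free_path=5.0,
                      start_energy=18.6, mean_loss=0.03):
    """Follow one electron until it leaves the column.

    Returns (hit_rear_wall, final_energy_keV, number_of_scatters).
    All default values are illustrative assumptions, not KATRIN parameters.
    """
    z = rng.uniform(0.0, column_length)  # random emission point
    # Random emission direction along the column axis. Grazing angles are
    # excluded (|cos| >= 0.05) purely to keep this toy loop short.
    cos_theta = rng.uniform(0.05, 1.0) * rng.choice((-1.0, 1.0))
    energy = start_energy
    scatters = 0
    while True:
        # Distance travelled before the next scatter.
        path = rng.expovariate(1.0 / mean_free_path)
        z += path * cos_theta
        if z <= 0.0:
            return True, energy, scatters   # reached the rear wall
        if z >= column_length:
            return False, energy, scatters  # left through the front
        # Still inside the gas: the electron scatters and loses some energy.
        energy = max(0.0, energy - rng.expovariate(1.0 / mean_loss))
        scatters += 1


def run(n_electrons=100_000, mean_free_path=5.0, seed=1):
    """Simulate many electrons and summarize those that hit the rear wall."""
    rng = random.Random(seed)
    hits = 0
    total_scatters = 0
    total_energy = 0.0
    for _ in range(n_electrons):
        hit, energy, scatters = simulate_electron(
            rng, mean_free_path=mean_free_path)
        if hit:
            hits += 1
            total_scatters += scatters
            total_energy += energy
    counted = max(hits, 1)  # avoid dividing by zero in tiny runs
    return {
        "rear_fraction": hits / n_electrons,
        "mean_scatters": total_scatters / counted,
        "mean_energy_keV": total_energy / counted,
    }
```

Calling `run(mean_free_path=10.0)` and `run(mean_free_path=1.0)` and comparing the two summaries gives a rough feel for the effect: in the "denser" case the electrons reaching the rear wall have scattered more often and arrive with less energy.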

I have a point, but you'll have to read on.

At the beginning of baseball season every year, you can usually find some article online talking about computer algorithms that predict the outcomes of the coming season. These algorithms calculate how many games a team will win, how many runs they will score, their likelihood of making the playoffs, and much more. The way they do it is actually quite simple (in principle).

These algorithms merely take the rosters of each team and use each player's projected stats to calculate the likelihood of every possible outcome of any particular pitcher-batter match-up. Using these stats, the algorithms simulate every game of the season, one plate appearance at a time. They then repeat the simulation about a thousand times to average out the random variations.
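To make the idea concrete, here is a toy version of that kind of simulation. It is a sketch under heavy assumptions, not how any real projection system works: each team gets a single set of plate-appearance probabilities instead of individual pitcher-batter match-ups, every runner advances as many bases as the batter (even on a walk), and the probability tables `STRONG` and `WEAK` are made-up numbers. All function names are hypothetical.

```python
import random

OUTCOMES = ["out", "walk", "single", "double", "triple", "homer"]
BASES = {"walk": 1, "single": 1, "double": 2, "triple": 3, "homer": 4}

# Made-up probabilities for each outcome, in the order of OUTCOMES.
STRONG = [0.64, 0.09, 0.16, 0.06, 0.01, 0.04]
WEAK = [0.70, 0.07, 0.15, 0.05, 0.005, 0.025]


def half_inning(rng, probs):
    """Simulate one half-inning, one plate appearance at a time."""
    outs, runs, runners = 0, 0, []  # runners holds the base of each man on
    while outs < 3:
        result = rng.choices(OUTCOMES, weights=probs)[0]
        if result == "out":
            outs += 1
            continue
        advance = BASES[result]
        # Simplification: everyone on base moves up with the batter.
        runners = [base + advance for base in runners] + [advance]
        runs += sum(1 for base in runners if base >= 4)
        runners = [base for base in runners if base < 4]
    return runs


def play_game(rng, team_probs, opponent_probs):
    """Return True if the team beats the opponent (extra innings if tied)."""
    team = opponent = 0
    inning = 0
    while inning < 9 or team == opponent:
        opponent += half_inning(rng, opponent_probs)
        team += half_inning(rng, team_probs)
        inning += 1
    return team > opponent


def average_wins(team_probs, opponent_probs, seasons=1000, games=162, seed=0):
    """Replay the whole season many times and average the win totals."""
    rng = random.Random(seed)
    total = 0
    for _ in range(seasons):
        total += sum(play_game(rng, team_probs, opponent_probs)
                     for _ in range(games))
    return total / seasons
```

Something like `average_wins(STRONG, WEAK, seasons=100)` then gives a projected win total for the stronger lineup. Repeating the season many times is what smooths out the luck of any single simulated year.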

Hopefully you're starting to see a pattern. Here's another example:

Last fall, I watched a few episodes of a show called "Deadliest Warrior". The premise of the show is that they explore and analyze the weapons and fighting techniques of some of history's most famous warriors, and try to answer the question of who was more lethal. It's the perfect show for any guy who has ever sat around drinking with his buddies and asked the question, "Hey, if a samurai and a Viking ever fought to the death, who would win?" The answer, of course- the samurai.

Anyway, so here's how the show went about answering this question: First, they invited experts and martial artists to showcase the weapons and techniques of each side, running tests on each weapon to determine its killing ability. Then, they put the data into a computer program that simulated the battle, blow by blow, a thousand times and tallied the wins for each side.

I'm not an expert in financial markets, but I'm pretty sure they do something similar there as well.

So, maybe my point is this: The work that goes on in the Physics world doesn't need to seem so distant and scary. There are many people out there who do very similar work in fields that are much more accessible to the general public.

As a matter of fact, my job is much easier than those that I highlighted earlier. My program is about 100 lines of Python code. Sports and battle simulations are much harder to do.

For example, those computer algorithms seem to predict the Yankees winning the World Series every year. This year is the first since 2000 that they've been right.

...And I think we know what kind of track record the banking industry has...

Tuesday, September 29, 2009

Irrational Emotion

So, football season has been going for a couple weeks now, and as my wife can attest to, I think I've been a little too preoccupied over the weekends. I've been a little puzzled myself as to why.

Football never struck me as all that great a sport. I always thought there were too many players, too many positions, and definitely too many rules. I never liked how one player, the quarterback, can account for seemingly half of a team's success (and take all the credit). I especially despised those stupid touchdown celebrations. In baseball, acting like that after hitting a home run would probably result in getting beaned in the hip by a fastball in your next at-bat.

But then I went to college and found out a fact that I hadn't even considered before- I suddenly had a team to root for. I followed my team's highs and lows (in that order) year after year until my head was about to explode.

Now I'm a little better versed in football terminology and have developed an appreciation for the game. After doing a quick search on Wikipedia, I can tell you a little about the different positions. I can tell you the difference between the 4-3 and 3-4. I also notice a few basic things when I watch- like how bad things happen when you don't get to the quarterback and why good time management is so important.

That being said, I'm still a bit confused over a few things: What's the difference between a running back and a tailback? How do you tell a chop block from a crackback? Why are wide receivers the only ones with the attitude problems?

And I still get confused by lines like "great lead-blocking in the backfield." I also get fooled by play-action way too often. It's a good thing I'm not a linebacker because I have a habit of losing sight of the football.

And now, after a particularly gruesome loss by my once-promising team (for about the fifth year in a row), I'm pondering why I've once again decided to devote so much energy to this futile endeavor.

To answer that question, I'll refer to an experiment I recently learned about from the field of group psychology. In this experiment, participants were paired up and played several sequences of the prisoner's dilemma game through a computer interface (so they couldn't see each other). (If you're not familiar with the prisoner's dilemma, it is enough to know that each person is given the option of either cooperating or backstabbing the other participant).
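For anyone who wants the game spelled out, here is a small sketch of the prisoner's dilemma. The payoff numbers (3, 0, 5, 1) are the conventional textbook values and are an assumption here, not the values used in the experiment. The names `PAYOFFS` and `best_response` are hypothetical.

```python
# Payoffs as (my points, partner's points) for each pair of moves.
# Conventional illustrative values, not taken from the experiment.
PAYOFFS = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}


def best_response(partner_move):
    """Return the move that earns me the most against a given partner move."""
    return max(("cooperate", "defect"),
               key=lambda my_move: PAYOFFS[(my_move, partner_move)][0])
```

With these numbers, `best_response` returns "defect" whatever the partner does, even though both players score more by cooperating (3 each) than by backstabbing each other (1 each). That tension is what makes a choice to cooperate a meaningful signal of trust.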

What made this experiment interesting was that the participants were told one bit of information about their partners prior to playing. This bit of information could be one of several things, including race, gender, taste in music, favorite ice cream flavor, or just about anything else.

The experimenters found that people were much more likely to cooperate with their partners when the bit of information they were told showed similarities with themselves. In other words, they treated people better when they were of the same race, gender, or even liked the same ice cream.

Even more astonishing was the result of the next experiment. In this next experiment, participants played the prisoner's dilemma game once again, but this time, they were placed in random groups. The participants never met each other face-to-face, were aware that the groups were selected randomly, and never learned any information about their partners apart from which group they belonged to.

Despite the fact that these groups were formed randomly, the participants showed the same favoritism for those in the same group that they showed for those with the same race in the first experiment. In many cases they weren't even aware that they showed this favoritism.

What do we learn from this experiment? I think it has powerful implications concerning the nature of human existence. We underestimate the importance of our group memberships in our everyday lives. This experiment shows that we cannot help but identify ourselves as members of certain groups, regardless of whether or not the organization of those groups shows any sense of logic or reason. It is an interesting (and somewhat frightening) prospect.

So in the context of this experiment, I suppose it becomes perfectly natural to root for a team that represents your school or the area where you grew up. If you really think about it, those players really have very little in common with us fans (I've never seen a burly offensive lineman squeeze into a chair at one of my physics lectures), but I suppose I'm just looking for a reason to root for someone. Any reason will do.

That seems fine to me.

Tuesday, September 8, 2009

Predictions

The following will all happen within my lifetime:

- The word "whom" will cease to exist, as will the spelling of the word "through" (to be replaced by "thru").

- The words "they", "them" and "their" will officially be recognized as gender-neutral singular third-person pronouns (ridding us of awkward phrases like "he or she" and "his or her").

- Theoretical Physicists will finally discover the ultimate theory of everything. Knowing their work to be over, they will attempt to save their jobs by withholding the final draft and publishing false theories in order to fool governments into rewarding more grant money.

- A monkey sitting at a typewriter will write a bestseller.

- Glenn Beck and Rush Limbaugh will both be admitted into mental institutions after being diagnosed with a rare neurological disorder characterized by an inability to discern one's mouth from one's rectum.

- After a series of major advances in robotics, robots will take over the work of many occupations, including assembly line work, patient care and retail. Unemployment will skyrocket.

- After a series of major advances in artificial intelligence, robots will gain the ability to design and build other robots. Unemployment will plummet as leagues of unemployed are drafted into the military to battle the robot armies. Will Smith, Christian Bale, and Keanu Reeves will each heroically attempt to save the human race from the brutal robot overlords. They will all fail.

Also, I've mentioned Glenn Beck in each of the last two posts (twice in this one if you include the monkey bit). I promise to try not to in the next post.

Wednesday, August 19, 2009

Critical Thinking

I recently came across something in a community college textbook that I found interesting. About three whole pages of this textbook were devoted to giving guidelines for intelligently reading articles of academic interest. I suppose I shouldn't be too surprised, since this is a very important skill to have for those of us in academic fields. However, I don't think there was anything included that any intelligent person shouldn't be able to figure out for himself. Here's roughly what it said:

But when it comes to our roles in mainstream society, there's no reason not to apply these skills to the best of our ability. Take politics, for example:

Do you think Sarah Palin is an expert in health care? What qualifications does she have to decide on issues that affect Americans, besides that time that she ruined McCain's chances of getting elected? How about Glenn Beck? What kind of pedigree is required to make up stuff on TV these days? Unless Glenn Beck is really Dr. Glenn Beck, Phd., it sounds like these two fail the critical thinking check number 1.

Saying that Obama wants to put your grandma to death is a pretty outlandish claim. So are claims that compare proposed health care reform to nazi eugenics. That's check number 2. Upon two failed checks, any sane person should be looking for number 3. Give me a quote from one of the bills (with a page number), and maybe I'll listen. Otherwise, I'd rather spend my time reading up on time cube or flat earth theory. At least those sets of meaningless blabber are moderately entertaining and don't influence the well-being of 47 million people.

Before I get down from my soapbox, I'd like to mention that I really wish I could find better examples from across the aisle. As much as I hate to say it, this isn't a problem with the Republican party, but more just politics in general.

Our political system is one in which "facts" are routinely carefully selected, spun, misinterpreted, or completely fabricated just to back up one's point of view. There isn't a politician alive who doesn't have ulterior motives. They will say whatever they can, just to improve the status of their party, or get a boost in their next campaign. That sounds an awful lot like check number four.

Here's something you can do- read up on the most common logical fallacies, and try to spot them next time you're watching cable news or a debate. Some are so prevalent, that they are named after political phrases that are used when they are committed (like "slippery slope"). Maybe a harder task is to spot an argument that doesn't contain a logical fallacy.

As for check number six, I think you'll agree with me that overgeneralization is not only common in politics, but is an accepted political strategy. For example, taking a thousand-page bill and calling it a "government take-over of health care" is certainly an overgeneralization.

None of these behaviors would be tolerated in any academic field. You wouldn't even tolerate it among your coworkers. Heck, you'd probably scold your kids for some of the same behaviors that are commonplace over on capital hill. And these are the people who are running the country. Go figure.

What's the most frustrating is the fact that this isn't just a big accident. These sorts of deceitful behaviors are nothing but politics-by-design.

Eh. Fuck it.

To be honest, I still don't see why this needs to be outlined in a textbook. After all, in the sciences, these are rules that researchers live or die by. These are things you pick up out of necessity. You either learn to apply them or are subject to ridicule by your peers.

When analyzing the claims that anyone is making, keep the following in mind:

1. Is the writer/speaker an expert in the subject on which he/she is talking about? If not, is there any reason you should trust what this person is saying? (I may be pretty picky, but when it comes to academic matters, an "expert" is someone with an advanced degree in the particular field- at least.)

2. Do the claims disagree with accepted knowledge or are outrageous for other reasons? Such claims are not necessarily false, but there has to be a reason that years of academic pursuit suggest otherwise. (This, usually along with #1, is a primary reason that you can immediately ignore crackpot theorists who make claims like "Quantum Mechanics is obviously wrong" without explaining why quantum mechanics predicts the result of every low-energy experiment ever performed.)

3. Does the writer/speaker provide evidence to support his/her claims? Does the evidence supplied hold up to the same scrutiny? (In academic papers, evidence is shown through the results of individual research, or through citing papers written by other researchers. Seriously- this should be the biggest no-brainer in this list.)

4. Could the writer/speaker have ulterior motives? There are many reasons that a person could make a certain claim, and the pursuit of truth is only one of them. The others include money, social status, political capital, embarrassment, and countless others. Don't be naive.

5. Does the argument contain logical fallacies? Here's a sample of a few:

- Circular logic

- Correlation implies causation

- Sweeping generalizations

- Bandwagon

- Arguing from ignorance

- Appeals to authority

- Slippery slope

6. Does the claim seem too simple, given the complexity of the subject matter? If someone offers a one-sentence solution to an age-old problem, that usually means that the person ignored a few factors that contributed to the problem in the first place.

But when it comes to our roles in mainstream society, there's no reason not to apply these skills to the best of our ability. Take politics, for example:

Do you think Sarah Palin is an expert in health care? What qualifications does she have to decide on issues that affect Americans, besides that time that she ruined McCain's chances of getting elected? How about Glenn Beck? What kind of pedigree is required to make up stuff on TV these days? Unless Glenn Beck is really Dr. Glenn Beck, Ph.D., it sounds like these two fail critical thinking check number 1.

Saying that Obama wants to put your grandma to death is a pretty outlandish claim. So are claims that compare proposed health care reform to Nazi eugenics. That's check number 2. Upon two failed checks, any sane person should be looking for number 3. Give me a quote from one of the bills (with a page number), and maybe I'll listen. Otherwise, I'd rather spend my time reading up on Time Cube or flat earth theory. At least those sets of meaningless blabber are moderately entertaining and don't influence the well-being of 47 million people.

Before I get down from my soapbox, I'd like to mention that I really wish I could find better examples from across the aisle. As much as I hate to say it, this isn't a problem with the Republican party, but more just politics in general.

Our political system is one in which "facts" are routinely carefully selected, spun, misinterpreted, or completely fabricated just to back up one's point of view. There isn't a politician alive who doesn't have ulterior motives. They will say whatever they can, just to improve the status of their party, or get a boost in their next campaign. That sounds an awful lot like check number four.

Here's something you can do- read up on the most common logical fallacies, and try to spot them next time you're watching cable news or a debate. Some are so prevalent, that they are named after political phrases that are used when they are committed (like "slippery slope"). Maybe a harder task is to spot an argument that doesn't contain a logical fallacy.

As for check number six, I think you'll agree with me that overgeneralization is not only common in politics, but is an accepted political strategy. For example, taking a thousand-page bill and calling it a "government take-over of health care" is certainly an overgeneralization.

Wednesday, July 29, 2009

Bored?

Here's something fun to do:

Browse the Flat Earth Society Forums and try to figure out which posters are actually serious. In my opinion, some of them must be serious, or else no one would have the energy to maintain that website. On the other hand, I can't believe that they can all believe that the earth is flat. I mean, some of those statements just defy too much reason for someone to actually believe in it. Then again, you just may be surprised.

By the way- I said to browse. Don't bother posting. If you think you have a chance at beating some of these people in a debate, you are quite mistaken. This isn't to say that they have good arguments or really a semblance of coherent thought. There are two specific reasons why you can't beat them in a debate, and here they are:

1. They don't listen to reason. Seriously. How else can you interpret their explanation for those NASA pictures that clearly show an Earth that is circular from all sides? Their answer- a conspiracy. Not only are the governments and scientific communities from all space-able nations involved, but so are satellite TV and GPS companies as well (they actually transmit signals via blimps and radio towers, since satellites are impossible). Those pictures taken from outer space are computer generated- 'cause everyone knows they had Photoshop back in the '60s.

Some even claim that there are guards stationed along the ice sheet at the edge of the world to make sure people don't try to go over the edge. Somewhere along the way, you've got to realize that there's something not quite right in the brain with these people here.

2. They can always make up new rules to explain the discrepancies you point out.

Example- why can't you see over the horizon? Answer: Because light follows a curved path while on Earth.

Why does the sun set? Answer: Because the sun (and moon, which gives off its own light) are like spotlights- not isotropic light sources. They only shine on certain parts of the world at a time as they follow circular paths exactly 3000 miles above the surface of the Earth.

How do you explain the phases of the moon? Answer: There is another heavenly body, unknown to mainstream science, which is completely black and at times likes to obscure our view of the moon.

How do you explain gravity on Earth? Answer: There is no gravity on Earth. Instead, a "Dark Energy" continuously accelerates the Earth upward at 9.8 m/s^2. By the way, they do concede that other bodies in space have gravity, thus explaining the existence of tides (but not the fact that there are two tides a day!). As for why other bodies have gravity but not the Earth? Because the Earth is SPECIAL!

Why do distances in the southern hemisphere seem closer than what is suggested by Flat Earth geography? Answer: Remember how the GPS companies are involved in the conspiracy? GPS software intentionally sends planes in paths that make distances in the northern hemisphere seem longer than they really are.

If you come up with something else that's not right with Flat Earth theory, they'll just come up with some other new assumption that would explain the observation. If they can't come up with an explanation, they'll just give the "your a sheep who's been brainwashed by the mainstream scientific conspiracy COME ON PEOPLE WHY DON'T YOU OPEN YOUR EYES!!!" argument.

These two pieces of idiot behavior are a constant among all crackpot pseudo-scientific theories, including null science, autodynamics, intelligent design, and countless others.

I've also observed it among most ardent followers of every religious and political area of thought. Just an observation...

I said most, so don't anyone get mad at me.

Saturday, July 25, 2009

Advertising

--Here's a classic:

"Did you know that 9 out of 10 people need a new mattress?"

Really? What do they sleep on? Two-by-fours? Piles of hay? I didn't think the economy had gotten that bad!

Seriously- what are the criteria for needing a new mattress? I'm pretty sure I do, but that's just because I'm moving to a new apartment. Are 90% of Americans currently relocating? Did their homes just get repossessed?

--"Drivers who switched from Geico to Allstate saved an average of $473."

You think maybe the fact that they saved money had anything to do with the fact that they switched? How many people who switched actually lost money? I have a hunch that number is close to zero, meaning the people who wouldn't have saved weren't included in the sample. The ad may as well say, "Drivers who switched from Geico to Allstate and saved at least $400 saved an average of $473!"
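If you want to see how little that number tells you, here's a minimal sketch in Python. Everything in it is made up for illustration: the `simulate` function, the 100,000 drivers, and the assumption that the two companies' quotes differ by a random amount averaging zero (so neither one is actually cheaper). The only rule is that people switch only if they'd save.

```python
import random
import statistics

def simulate(n_drivers=100_000, seed=1):
    """Compare the average price gap for everyone vs. for switchers only."""
    rng = random.Random(seed)
    # Hypothetical assumption: (current premium - competitor's quote) is
    # random with mean zero, so neither insurer is cheaper on average.
    gaps = [rng.gauss(0, 500) for _ in range(n_drivers)]
    # Selection step: only drivers who would save money bother to switch.
    switchers = [gap for gap in gaps if gap > 0]
    return statistics.mean(gaps), statistics.mean(switchers)

everyone, switchers_only = simulate()
print(f"average gap, all drivers:     ${everyone:.0f}")
print(f"average savings, switchers:   ${switchers_only:.0f}")
```

With these made-up numbers, the average over all drivers sits near zero while the switchers "saved" roughly $400 on average, which is the whole trick: the savings figure describes who was counted, not which company is cheaper.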

--"Getting the right coverage isn't just about the car, it's about who's in the back seat."

Apparently, car insurance can prevent your kids from getting hurt in a car accident. It's like magic! Oh, wait. No. They just cut you a check and then raise your premiums. Sorry. You'll have to find a witch doctor or something.

--I'm a little tired of fast food commercials where fast food chains try to tell you why their fast food is better than other fast food.

Going to a fast food chain usually isn't one of the best moments of my life. Those moments aren't exactly a good time for brand loyalty. I can't imagine how bad your life has to be in order for you to be particular about your fast food. I just know that the decision of which chain to visit is usually dependent on which one is closest. Then comes the self-hate.

--Around here there is a college called 4-D College that airs commercials. First of all, I don't know anything about this college apart from what's in the commercials. It may very well be a good place to study, but I'm not convinced. So, what does 4-D stand for? No, it's not the average report card of their top students. 4-D stands for the following:

1: Determination

2: Desire

3: Drive

4: Deliver

What? Now, I'm not sure where they make the rules for these mnemonic-driven bullet-point list things, but I'm pretty sure you're not allowed to start it with three nouns and end it with a verb. Plus, the first three are close enough to synonyms to discount the whole list in the first place. Once again, this college may very well be perfectly sufficient in preparing its students for the workplace, but if the commercial is indicative of the education you'll get there...

All I'm saying is that it's usually a good idea to put your best foot forward. And hopefully you've got a good one to show.

Sunday, July 19, 2009

Heisenberg Uncertainty

One of the things that just about everyone knows about quantum mechanics is that it is a theory that only predicts probabilities. In other words, even if you know everything about a particle there is to know, you still may not be able to say where it is. The only thing quantum mechanics can tell you is the probability of detecting the particle in any given location. This fact does not have a famous name, but is often referred to as the indeterminacy of quantum mechanics. What it is not called, however, is the Heisenberg uncertainty principle. I've heard everyone from Jon Stewart to cult-recruitment movies get this little bit of terminology wrong. This post is about what the Heisenberg uncertainty principle actually is.

The Heisenberg uncertainty principle is something much more specific, and much more interesting. It is a piece of the weirdness of quantum mechanics all wrapped up in simple mathematics. In case you are wondering why I never mentioned it in the definition of quantum mechanics that I wrote in the previous post, the answer is that I didn't have to. The Heisenberg uncertainty principle can be derived explicitly from what was written there. Thus, any evidence that violates this principle in turn violates all of quantum mechanics. Luckily (or unluckily), no one has ever found any such evidence (despite the efforts of many, with none other than Albert Einstein at the head).

So what does the Heisenberg uncertainty principle say?

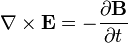

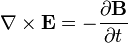

Well, in quantum mechanics, there are many observable quantities, like position, momentum, angular momentum, energy, etc. The Heisenberg uncertainty principle states that certain pairs of observable quantities are incompatible, which means that it is impossible to know both quantities of a particular particle simultaneously to arbitrary precision. There are many such incompatible pairs, the most famous of which is position and momentum. Other pairs include time and energy, and orthogonal components of angular momentum. The position-momentum uncertainty principle is mathematically represented like this:

$$\Delta x \, \Delta p \geq \frac{\hbar}{2}$$

Here Δx and Δp are the uncertainties (standard deviations) in position and momentum, and ħ is the reduced Planck constant.

Heisenberg showed evidence for this principle by asking what would happen if one were to try and measure either of these quantities. For example, imagine you have a particle inside a box, and you wish to measure its precise location.

So, to find the location of the particle, you might open a window and shine a light inside, and then study the light that is scattered off of the particle. In this way, you can know where the particle was at the instant you shined the light on it to arbitrary accuracy. However, the light you shine on the particle, by scattering off of it, can impart any of a wide range of possible momenta to it. As a matter of fact, if you would like to decrease the uncertainty in your position measurement, you would have to use light of shorter wavelength, which has higher momentum and would produce a wider spread in the particle's resulting momentum distribution (by the way, to those of you who are familiar with the collapse of the wave function, this is one illustration of how it could actually happen- no sentient beings necessarily involved).
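As a rough back-of-the-envelope version of this argument (an order-of-magnitude sketch, not a rigorous derivation): light of wavelength λ can't pin down the particle's position much better than λ itself, and each photon carries momentum h/λ, some unknown fraction of which gets handed to the particle. Putting those two estimates together:

$$\Delta x \sim \lambda, \qquad \Delta p \sim \frac{h}{\lambda} \quad\Longrightarrow\quad \Delta x \, \Delta p \sim h$$

Shrinking λ improves Δx but worsens Δp by the same factor, so the product doesn't budge.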

Measurements that would determine the momentum of a particle would similarly produce spreads in the position distribution in very real and concrete ways.

However, some would say that this argument is not entirely satisfactory, since it only shows how the position and momentum of a particle cannot both be known to arbitrary certainty. The Copenhagen interpretation insists that these values cannot even exist simultaneously. To even guess at the values would be in violation of the laws of physics.

In other words, a particle with perfectly defined position has momentum in all magnitudes simultaneously. A particle with perfectly defined momentum exists in all places in the universe.

However, another way to look at things might make this principle seem completely ordinary. In quantum theory, all particles are described by wave functions, not points. A particle's position is described by the position distribution of its wave function. The particle's momentum is described by the frequency distribution of the wave function.

Therefore, a particle with perfectly-defined position has a wave function that is a single spike- in mathematical terms, a Dirac delta function. A delta function has a frequency distribution that stretches to infinity in both directions, meaning that the momentum would have no definition at all.

On the other side, a particle with perfectly defined momentum would have a wave function that is an infinitely long sine wave. This function gives a spike in the frequency distribution, but extends to both sides of infinity in position-space.

This argument makes perfect mathematical sense (at least if you've taken a course in Fourier analysis). However, it is only valid if you assume that the wave function describes the entire state of the particle. Hidden variable theories claim that there is another piece to the puzzle- therefore, to prove the existence of a hidden variable, one would just have to show a situation with Heisenberg uncertainty violation. (Once again, Einstein himself tried and failed. Do you think you've got a shot?)
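If you haven't taken that Fourier analysis course, you can still check the trade-off numerically. The following is a minimal sketch, assuming a Gaussian wave packet (my choice of example, since it sits between the spike and the infinite sine wave); the function names `spread` and `uncertainty_product` and the grid sizes are just illustrative. It computes the position spread Δx and the spread Δk of the spatial-frequency distribution for packets of different widths.

```python
import cmath
import math

def spread(grid, density):
    """Standard deviation of a density sampled on an evenly spaced grid."""
    total = sum(density)
    mean = sum(g * d for g, d in zip(grid, density)) / total
    variance = sum((g - mean) ** 2 * d for g, d in zip(grid, density)) / total
    return math.sqrt(variance)

def uncertainty_product(sigma, n=201):
    """Return (dx, dk, dx*dk) for a Gaussian wave packet of position width sigma."""
    # Position grid spanning +/- 10 sigma; psi is chosen so |psi|^2 has std sigma.
    xs = [sigma * (-10 + 20 * i / (n - 1)) for i in range(n)]
    psi = [math.exp(-x * x / (4 * sigma * sigma)) for x in xs]
    # Wavenumber grid spanning +/- 5/sigma, wide enough to hold the transform.
    ks = [(-5 + 10 * j / (n - 1)) / sigma for j in range(n)]
    # Direct (slow but simple) Fourier sum; constant factors drop out of the spreads.
    phi = [sum(p * cmath.exp(-1j * k * x) for p, x in zip(psi, xs)) for k in ks]
    dx = spread(xs, [p * p for p in psi])
    dk = spread(ks, [abs(f) ** 2 for f in phi])
    return dx, dk, dx * dk

for sigma in (0.5, 1.0, 2.0):
    dx, dk, product = uncertainty_product(sigma)
    print(f"sigma={sigma}: dx={dx:.3f}  dk={dk:.3f}  dx*dk={product:.3f}")
```

Squeezing the packet in position (smaller sigma) widens it in k by the same factor, and the product stays close to 0.5 every time. Since momentum is p = ħk, that is Δx Δp = ħ/2, the smallest value the uncertainty principle allows.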

So, for those of you who are not yet entirely clear on this whole thing, let's look at what I think is the simplest example- spin states.

So, as you may know, certain particles like electrons and protons are called spin-1/2 particles. You may have heard that these particles have two spin states, commonly called spin-up and spin-down. Well, this picture omits a few details, so let's start over.

So, spin is a vector quantity that describes the innate angular momentum of certain particles. The fact that it is a vector quantity means that it has three components which we'll call the x-, y-, and z- components. What's special about spin is that for any particle, the magnitude of this vector is a constant, though each of the components is not.

Another interesting thing about spin is the fact that for spin-1/2 particles, there are exactly two stationary states corresponding to each spin component. So, for the z-component of spin, there are two stationary states, commonly called spin-up and spin-down. Likewise, looking at the x-component of spin, there are two different stationary states, which we'll agree to call spin-right and spin-left- for sake of the argument I'll present in a minute.

Now here's where things get interesting. It turns out that the three components of spin are incompatible in the Heisenberg sense. Therefore, if you know that an electron is in a spin-up state, the x- and y- components necessarily are undefined.

Imagine that you're on a plane, and you ask the flight attendant which direction you happen to be flying. She says, "We're headed in the eastern direction. Whether we're headed north-east or south-east is undefined."

Bewildered, you ask the flight attendant if she could go to the cockpit and confer with the pilot whether they are headed north or south. The flight attendant returns, and says, "We're headed north, but now we don't know if we're headed north-east or north-west".

Now, let's imagine we're in a physics lab with an electron in a box. We measure the z-component of spin of this electron (let's not worry about how), and measure it to be in the spin-up state. Heisenberg comes by and says, "Now that the z-component is defined, the x-component is undefined and therefore has no value".

You say, "Poppycock! The x-component must be defined, or none of this makes sense! Why can't I just measure the x-component and find its value?"

So, you do the measurement along the x-axis, and now find that it is in the spin-right state.

You grin and exclaim, "Heisenberg, you're a fraud! This electron is spin-up and spin-right, thereby invalidating your uncertainty principle!"

Heisenberg responds, "Well, the particle was spin-up until you measured the spin along the x-axis. Now that the x-component is defined, the z-component is no longer. By making the second measurement, you caused the wave-function to collapse, thereby invalidating the first measurement."

You say, "Well, I never understood the wave-function collapse thing anyway. You'll have to provide another argument."

"Well, why don't you just measure the z-component once again?"

At this point two things could happen:

1: There is a 50% chance that you measure the particle to be spin-up again, in which case, you grin at Heisenberg until he convinces you to flip the coin again by measuring the x-component once more.

2: There is a 50% chance that the particle will now be spin-down. Now there's egg all over your face, since it is clear that the particle ceased to be spin-up as soon as you measured it to be spin-right. Otherwise, subsequent measurements of the z-component would always reveal it to be spin-up.
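If you'd rather not take Heisenberg's word for the coin flip, here's a minimal sketch that simulates the experiment, assuming the standard textbook rule that a measurement gives each outcome with probability equal to the squared overlap with that state, and then leaves the particle in that state. The names `BASES`, `measure`, and `fraction_still_up` are made up for this illustration.

```python
import math
import random

# Spin states written in the z-basis as (amplitude_up, amplitude_down).
S = 1 / math.sqrt(2)
BASES = {
    "z": {"up": (1 + 0j, 0j), "down": (0j, 1 + 0j)},
    "x": {"right": (S + 0j, S + 0j), "left": (S + 0j, -S + 0j)},
}

def overlap_probability(state, eigenstate):
    """Squared magnitude of the overlap between two spin states."""
    amplitude = sum(e.conjugate() * s for e, s in zip(eigenstate, state))
    return abs(amplitude) ** 2

def measure(state, axis, rng):
    """Measure spin along an axis; return (outcome, collapsed state)."""
    r = rng.random()
    cumulative = 0.0
    for name, eigenstate in BASES[axis].items():
        cumulative += overlap_probability(state, eigenstate)
        if r < cumulative:
            return name, eigenstate
    return name, eigenstate  # guard against floating-point rounding

def fraction_still_up(measure_x_in_between, trials=20_000, seed=0):
    """Start spin-up, optionally measure x, then measure z again."""
    rng = random.Random(seed)
    still_up = 0
    for _ in range(trials):
        state = BASES["z"]["up"]
        if measure_x_in_between:
            _, state = measure(state, "x", rng)
        outcome, _ = measure(state, "z", rng)
        still_up += outcome == "up"
    return still_up / trials

print("z then z:        ", fraction_still_up(False))
print("z then x then z: ", fraction_still_up(True))
```

Measuring z twice in a row always gives spin-up the second time. Slip an x measurement in between, and spin-up comes back only about half the time, which is exactly the egg-on-face scenario above.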

There's still one little caveat in this Heisenberg uncertainty business. That is, we still haven't really established what the cause of all this is. On the one hand, it could be an innate property of the particles involved. A particle known to be in a specific location just doesn't have a well-defined momentum. On the other hand, it could be a product of the effects of measurement. Strange mathematical coincidences regarding wave-function collapse make it impossible for the momentum to be known, but it may nevertheless exist. These two interpretations happen to be represented by two sides of the old quantum mechanics debate. On the one side is Niels Bohr with the Copenhagen interpretation- on the other, Albert Einstein and the hidden variables approach. Maybe I'll write more on that if this little girl in my lap will let me.

Maybe.

The Heisenberg uncertainty principle is something much more specific, and much more interesting. It is a piece of the weirdness of quantum mechanics all wrapped up in simple mathematics. In case you are wondering why I never mentioned it in the definition of quantum mechanics that I wrote in the previous post, the answer is that I didn't have to. The Heisenberg uncertainty principle can be derived explicitly from what was written there. Thus, any evidence that violates this principle in turn violates all of quantum mechanics. Luckily (or unluckily), no one has ever found any such evidence (despite the efforts of many, with none other than Albert Einstein at the head).

So what does the Heisenberg uncertainty principle say?

Well, in quantum mechanics, there are many observable quantities, like position, momentum, angular momentum, energy, etc. The Heisenberg uncertainty principle states that certain pairs of observable quantities are incompatible, which means that it is impossible to know both quantities of a particular particle simultaneously to a certain level of certainty. There are many such incompatible pairs, the most famous of which is position and momentum. Other pairs include time and energy, and orthogonal components of angular momentum. The position-momentum uncertainty principle is mathematically represented like this:

Heisenberg showed evidence for this principle by asking what would happen if one were to try and measure either of these quantities. For example, imagine you have a particle inside a box, and you wish to measure its precise location.

So, to find the location of the particle, you might open a window and shine a light inside, and then study the light that is scattered off of the particle. In this way, you can know where the particle was at the instant you shined the light on it to arbitrary accuracy. However, the light you shine on the particle, by scattering off of it, can impart a wide range of possible momentum into it. As a matter of fact, if you would like to decrease the uncertainty behind your position measurement, you would have to use light of shorter wavelength, which has higher momentum and would produce a wider spread in the particle's resulting momentum distribution (by the way, to those of you who are familiar with the collapse of the wave function, this is one illustration of how it could actually happen- no sentient beings necessarily involved).

Measurements that would determine the momentum of a particle would similarly produce spreads in the position distribution in very real and concrete ways.

However, some would say that this argument is not entirely satisfactory, since it only shows how the position and momentum of a particle cannot both be known to arbitrary certainty. The Copenhagen interpretation insists that these values cannot even exist simultaneously. To even guess at the values would be in violation of the laws of physics.

In other words, a particle with perfectly defined position has momentum in all magnitudes simultaneously. A particle with perfectly defined momentum exists in all places in the universe.

However, another way to look at things might make this principle seem completely ordinary. In quantum theory, all particles are described by wave functions, not points. A particle's position is described by the position distribution of its wave function. The particle's momentum is described by the frequency distribution of the wave function.

Therefore, a particle with perfectly-defined position has a wave function that is a single spike- in mathematical terms, a Dirac delta function. A delta function has a frequency distribution that stretches to infinity in both directions, meaning that the momentum would have no definition at all.

On the other side, a particle with perfectly defined momentum would have a wave function that is an infinitely long sine wave. This function gives a spike in the frequency distribution, but extends to both sides of infinity in position-space.

This argument makes perfect mathematical sense (at least if you've taken a course in Fourier analysis). However, it is only valid if you assume that the wave function describes the entire state of the particle. Hidden variable theories claim that there is another piece to the puzzle- therefore, to prove the existence of a hidden variable, one would just have to show a situation with Heisenberg uncertainty violation. (Once again, Einstein himself tried and failed. Do you think you've got a shot?)

So, for those of you who are not yet entirely clear on this whole thing, lets look at what I think is the simplest example- spin states.

So, as you may know, certain particles like electrons and protons are called spin-1/2 particles. You may have heard that these particles have two spin states, commonly called spin-up and spin-down. Well, this picture omits a few details, so let's start over.

So, spin is a vector quantity that describes the innate angular momentum of certain particles. The fact that it is a vector quantity means that it has three components which we'll call the x-, y-, and z- components. What's special about spin is that for any particle, the magnitude of this vector is a constant, though each of the components is not.

Another interesting thing about spin is the fact that for spin-1/2 particles, there are exactly two stationary states corresponding to each spin component. So, for the z-component of spin, there are two stationary states, commonly called spin-up and spin-down. Likewise, looking at the x-component of spin, there are two different stationary states, which we'll agree to call spin-right and spin-left- for sake of the argument I'll present in a minute.

Now here's where things get interesting. It turns out that the three components of spin are incompatible in the Heisenberg sense. Therefore, if you know that an electron is in a spin-up state, the x- and y- components necessarily are undefined.

Imagine that you're on a plane, and you ask the flight attendant which direction you happen to be flying. She says, "We're headed in the eastern direction. As to whether we're headed north-east or south-east is undefined".

Bewildered, you ask the flight attendant if she could go to the cockpit and confer with the pilot whether they are headed north or south. The flight attendant returns, and says, "We're headed north, but now we don't know if we're headed north-east or north-west".

Now, let's imagine we're in a physics lab with an electron in a box. We measure the z-component of spin of this electron (let's not worry about how), and measure it to be in the spin-up state. Heisenberg comes by and says, "Now that the z-component is defined, the x-component is undefined and therefore has no value".

You say, "Poppycock! The x-component must be defined, or none of this makes sense! Why can't I just measure the x-component and find its value?"

So, you do the measurement along the x-axis, and now find that it is in the spin-right state.

You grin and exclaim, "Heisenberg, you're a fraud! This electron is spin-up and spin-right, thereby invalidating your uncertainty principle!"

Heisenberg responds, "Well, the particle was spin-up until you measured the spin along the x-axis. Now that the x-component is defined, the z-component is no longer. By making the second measurement, you caused the wave-function to collapse, thereby invalidating the first measurement."

You say, "Well, I never understood the wave-function collapse thing anyway. You'll have to provide another argument."

"Well, why don't you just measure the z-component once again?"

At this point two things could happen:

1: There is a 50% chance that you measure the particle to be spin-up again, in which case, you grin at Heisenberg until he convinces you to flip the coin again by measuring the x-component once more.

2: There is a 50% chance that the particle will now be spin-down. Now there's egg all over your face, since it is clear that the particle ceased to be spin-up as soon as you measured it to be spin-right. Otherwise, subsequent measurements of the z-component would always reveal it to be spin-up.
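If you don't trust Heisenberg (or me), you can play the game on a computer. Below is a minimal sketch of the experiment, assuming the textbook measurement rules: the chance of an outcome is the square of the overlap between the current state and that outcome's state, and the state collapses to whatever was measured. The state table and function names are hypothetical, and the shortcut of using real amplitudes only works because we stick to the z- and x-axes.

```python
import random

S = 2 ** -0.5  # 1/sqrt(2)

# Each state is written as (amplitude of z-up, amplitude of z-down).
# Assumption: real amplitudes only, which is enough for the z- and x-axes.
STATES = {
    "z": {"up": (1.0, 0.0), "down": (0.0, 1.0)},
    "x": {"right": (S, S), "left": (S, -S)},
}

def measure(state, axis, rng):
    """Measure spin along `axis`; return (outcome name, collapsed state)."""
    (name_a, vec_a), (name_b, vec_b) = STATES[axis].items()
    overlap = vec_a[0] * state[0] + vec_a[1] * state[1]
    prob_a = overlap ** 2  # probability of the first outcome
    if rng.random() < prob_a:
        return name_a, vec_a
    return name_b, vec_b

def fraction_still_up(trials, seed=0):
    """Start spin-up, measure x, then measure z again; return the spin-up fraction."""
    rng = random.Random(seed)
    still_up = 0
    for _ in range(trials):
        state = STATES["z"]["up"]
        _, state = measure(state, "x", rng)        # this measurement scrambles z
        outcome, state = measure(state, "z", rng)
        still_up += outcome == "up"
    return still_up / trials

if __name__ == "__main__":
    print("fraction still spin-up:", fraction_still_up(20000))
```

The fraction lands near one half, just like the coin flip described above. If you drop the x measurement from the loop, the second z measurement comes up spin-up every single time.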

There's still one little caveat in this Heisenberg uncertainty business. That is, we still haven't really established what the cause of all this uncertainty is. On the one hand, it could be an innate property of the particles involved. A particle known to be in a specific location just doesn't have a well-defined momentum. On the other hand, it could be a product of the effects of measurement. Strange mathematical coincidences regarding wave-function collapse make it impossible for the momentum to be known, but it may nevertheless exist. These two interpretations happen to be represented by two sides of the old quantum mechanics debate. On the one side is Niels Bohr with the Copenhagen interpretation- on the other, Albert Einstein and the hidden variables approach. Maybe I'll write more on that if this little girl in my lap will let me.

Maybe.

Wednesday, July 8, 2009

Quantum Mechanics

Quantum Mechanics is one of the most popular yet misunderstood physics topics out there. There are many myths around quantum mechanics that I run into from time to time, and I thought I'd devote some posts to the topic.

Perhaps the biggest myth surrounding quantum mechanics is the idea that it doesn't make sense. This idea is absurd. Quantum mechanics describes how our world works- if it doesn't make sense, then you just don't understand it. Or at least you haven't thought about it in the right way.

Quantum mechanics is baffling yet incredibly simple. You can literally write down all of quantum mechanics on a half-sheet of paper. As a matter of fact, here it is:

- The state of a system is entirely represented by its wave function, which is a unit vector of any number of dimensions (including infinite) existing in Hilbert space. The wave function can be calculated from the Schrodinger equation: iħ ∂Ψ/∂t = ĤΨ, where Ĥ is the Hamiltonian (energy) operator.

- The expectation value (in a statistical sense) of an observable quantity is the inner product of the wave function with the wave function after being operated on by the observable's Hermitian operator.

- Determinate states, or states of a system that correspond to a constant observed value, are eigenstates of the observable's Hermitian operator, while the observed value is the eigenvalue. (e.g., energy levels that give rise to discrete atomic spectra are eigenvalues corresponding to energy determinate states.)

- Determinate states of the same observable with different values are orthogonal, and all possible states can be expressed as a linear combination of determinate states.

- When a measurement is made, the probability of getting a certain value is the squared magnitude of the inner product of that value's determinate state with the wave function.

- Upon measurement, the wave function "collapses", becoming the determinate state corresponding to the value that was measured.
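To make the list a little less abstract, here is a rough sketch of the expectation-value and probability rules for the smallest possible system, a single spin-1/2 particle. The example state, the operator (the z-component of spin in units of hbar/2), and the helper names are my own choices for illustration. For probabilities it uses the standard Born rule: the squared magnitude of the inner product.

```python
def inner(a, b):
    """Inner product <a|b>, conjugating the first vector."""
    return sum(x.conjugate() * y for x, y in zip(a, b))

def apply(op, vec):
    """Apply a matrix operator to a state vector."""
    return [sum(row[j] * vec[j] for j in range(len(vec))) for row in op]

def expectation(op, psi):
    """Expectation value <psi| op |psi> of a Hermitian operator."""
    return inner(psi, apply(op, psi)).real

def probability(determinate_state, psi):
    """Born rule: squared magnitude of <determinate state|psi>."""
    return abs(inner(determinate_state, psi)) ** 2

# z-component of spin in units of hbar/2, and its two determinate states.
SZ = ((1, 0), (0, -1))
UP = (1, 0)     # eigenvalue +1
DOWN = (0, 1)   # eigenvalue -1

# A hypothetical unit-length state: 0.6 |up> + 0.8i |down>
PSI = (0.6, 0.8j)

if __name__ == "__main__":
    p_up = probability(UP, PSI)
    p_down = probability(DOWN, PSI)
    print("P(up) =", p_up, " P(down) =", p_down)
    print("<Sz> =", expectation(SZ, PSI))
    print("weighted average of the eigenvalues =", p_up * 1 + p_down * -1)
```

The two probabilities add up to one, and the expectation value matches the probability-weighted average of the two possible measured values, which is all the "statistical sense" in the list really means.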

So how bad was that?

Okay, so this is probably confusing to those of you who haven't had a class in quantum mechanics or an advanced course in linear algebra. Getting past the math, it really isn't that hard conceptually. The important thing to note, though, is the fact that it can be defined so concisely. I think I've actually included more than necessary, so it probably can be even more concise than what I've written. Quantum mechanics is pretty complex in application, but is simple at its core. All of the best theories have this quality.

I'll probably post some stuff later that will (hopefully) clear up some of the details.

Another myth I run into is around the term "Quantum Physicist". There is no such thing- at least in the professional sense. The reason is that there isn't a physicist in the world who doesn't use quantum mechanics. If the term "Quantum Physicist" represents a scientist who uses quantum mechanics in his/her research, we can probably just agree to use the term "Physicist". Likewise, it is impossible to go to college and major in "Quantum Physics". Any respectable university would require its physics majors to learn quantum mechanics, so there is no reason to create a new major around it. I'm saying this in reference to the nerdy characters in movies and TV shows who are described using these terms. If you know anyone who writes screenplays, let them know.

I was going to get into some of the misunderstandings around actual quantum mechanics, but maybe I'll get into it later. Most of these misunderstandings involve the indeterminacy of the statistical interpretation, the Heisenberg uncertainty principle, and the collapse of the wave function. I'll try to get to all these issues later. For now, I'm hungry.

Tuesday, June 30, 2009

Cartoons

My eight-year-old brother-in-law's 'Spongebob Squarepants' watching has made me nostalgic for old cartoons of my childhood. If only I could find a single episode of 'Looney Tunes' on TV somewhere.

But now that 'Looney Tunes' is off the tube, there are some questions that I find myself asking- like "where are kids these days going to get the introduction to classical music that I had?" 'Looney Tunes' was how kids of my generation got to hear great pieces like Tchaikovsky's "Romeo and Juliet" and Wagner's "Ride of the Valkyries". Of course, I learned them under the titles "Shot-by-Cupid's-arrow Theme" and "Kill the Wabbit", but I learned them nonetheless. When I just got started listening to classical music, it was nice to hear something familiar. Kids these days don't even recognize these pieces.

Of course, 'Looney Tunes' is considered by today's standards to be too 'violent'. But to put things in perspective, let's look at a cartoon that is readily watched by kids on TV today called Pokemon.

So, the basic idea behind Pokemon is that a bunch of kids go romping through the woods in search of certain creatures. When they find a creature they want, they beat it up, and trap it inside a tiny ball- where it will stay night and day for just about the remainder of its life. In fact, the only time these creatures are allowed to come out is when they are forced to battle each other for the praise of their owners.

Wow. That sounds like an activity that Michael Vick would find enjoyable. I'm surprised PETA isn't more involved.

Okay, so maybe my point isn't that clear, but here's what I think: Let's not get into a tizzy fit over what kids watch on TV. Seeing some cartoonish violence isn't nearly as harmful as the effects of being ignored and inactive for long periods of time. Give them attention and something to do with their time, and your kids'll be just fine.

Tuesday, June 16, 2009

'Fatherhood'

The next time you know someone who just had his first baby and you want to ask a question like, "so how does it feel to be a father?"- just wait a little while. Wait until after the first few sleepless nights and diaper changes. Maybe he'll have a better idea by then.